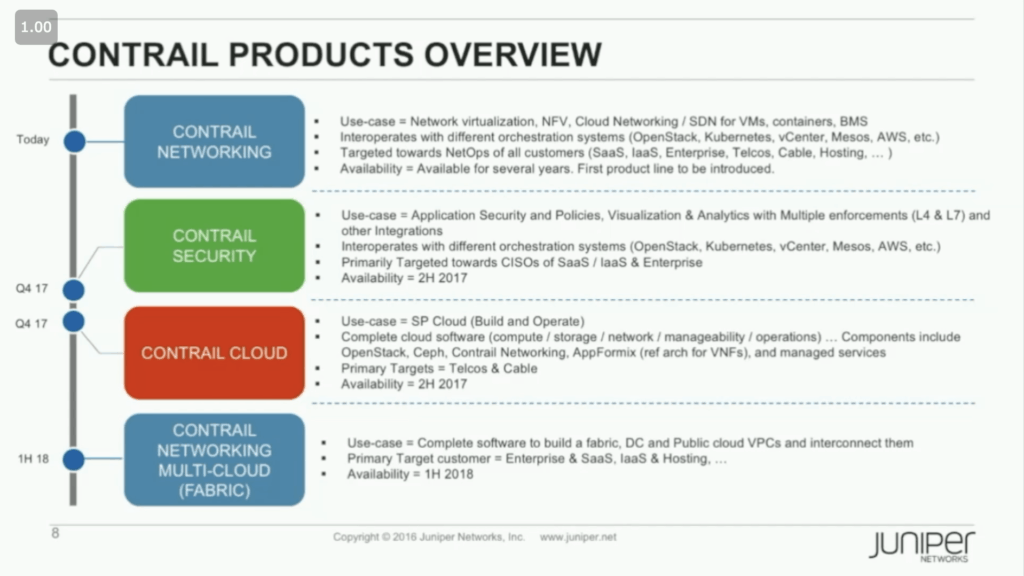

I recently had the privilege of hearing from the Juniper Networks Contrail product team at their offices in Sunnyvale. I was pleasantly surprised by the pragmatic and comprehensive approach they are taking in growing the product line. By the middle of this year (2018) there will be four distinct products: Contrail Networking, Contrail Security, Contrail Cloud, and the newest, Contrail Multicloud.

As you can see in the above slide, Contrail Cloud is focused on building and operating a service provider (SP) cloud. While that is super interesting, this post is going to focus on building and operating a secure multicloud network fabric with the new Contrail Multicloud offering, which obviously builds on Contrail Networking and Contrail Security.

Re-Defining Core and Access

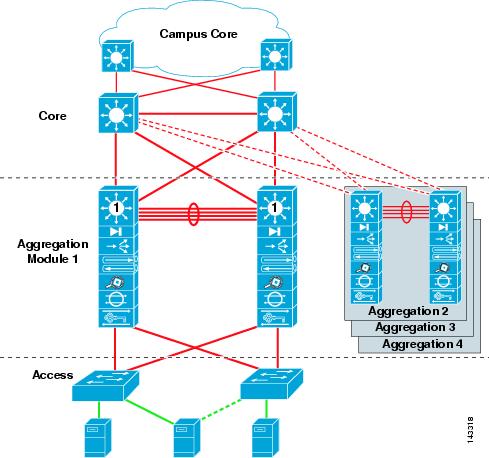

Before I dig into any specifics of the Contrail solution, let’s first explore a couple of key concepts that I think are driving the evolution of networking. Perhaps the most fundamental, and possibly the most controversial, trend that I see developing is a shift in our basic approach to network architecture. In the past, when we designed, built, and operated networks as a collection of devices (routers, switches, and firewalls), we defined our network architecture in terms of physical layers. The three-tiered Core, Aggregation/Distribution, and Access model is familiar to every network engineer. The Access layer is where compute, storage, and user devices connect into the network. The Aggregation/Distribution layer interconnects multiple access layer devices and is typically where routing, policy, load balancing, and other network functions are applied between hosts, subnets, and VLANs. The Core layer interconnects the aggregation/distribution layer devices; the goal here is to move packets/frames, fast!

Server virtualization and new application frameworks have forced us to reconsider this model. Massive increases in East-West traffic, workload mobility, ephemeral workloads, and drastically reduced turn-up times (spinning up a VM takes much less time than racking a server) are some of the effects that have led us to a new model. Today a typical new network looks quite a bit different.

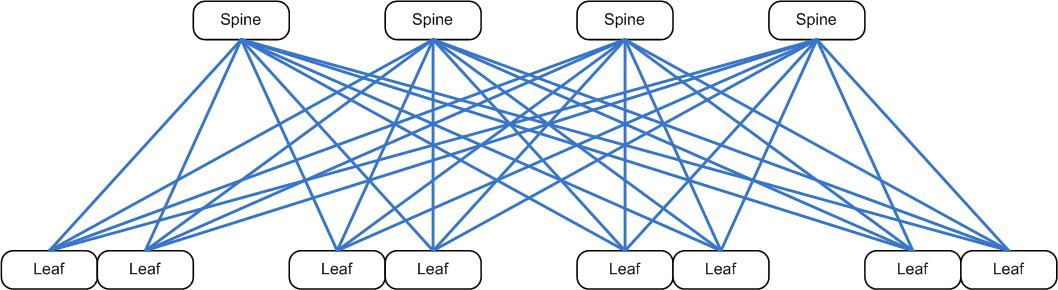

Instead of a multi-tier hierarchical design, we have found folded-Clos (spine-leaf) networks much more efficient at moving large quantities of packets from anywhere to anywhere.
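To make “anywhere to anywhere” a bit more concrete, here is a small sketch of why this works. In a two-tier spine-leaf fabric every leaf connects to every spine, so any two leaves are exactly two hops apart and there is one equal-cost path per spine. The node names and fabric sizes below are made up purely for illustration.

```python
from itertools import product


def build_leaf_spine(num_spines: int, num_leaves: int) -> dict:
    """Return an adjacency map for a two-tier folded-Clos (spine-leaf) fabric.

    Every leaf links to every spine; spines never link to each other,
    and neither do leaves.
    """
    spines = [f"spine{i}" for i in range(num_spines)]
    leaves = [f"leaf{j}" for j in range(num_leaves)]
    adj = {node: set() for node in spines + leaves}
    for spine, leaf in product(spines, leaves):
        adj[spine].add(leaf)
        adj[leaf].add(spine)
    return adj


def leaf_to_leaf_paths(adj: dict, src: str, dst: str) -> list:
    """List every shortest path between two leaves: leaf -> spine -> leaf."""
    return [(src, spine, dst) for spine in sorted(adj[src]) if dst in adj[spine]]


fabric = build_leaf_spine(num_spines=4, num_leaves=16)
paths = leaf_to_leaf_paths(fabric, "leaf0", "leaf9")
print(len(paths), paths[0])  # prints: 4 ('leaf0', 'spine0', 'leaf9')
```

Adding a fifth spine adds a fifth equal-cost path without changing the hop count, which is what lets this design keep up with heavy East-West traffic.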

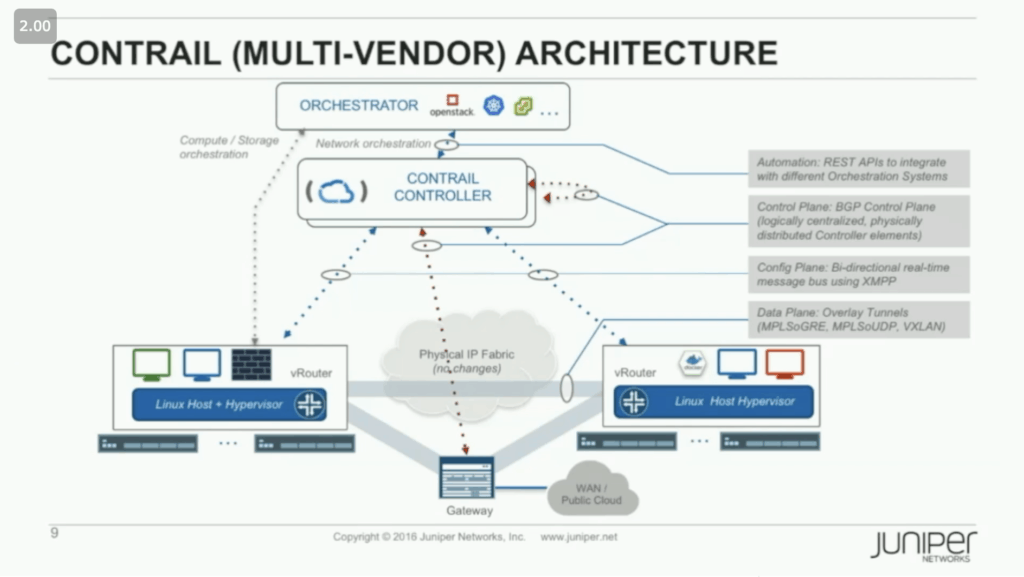

In order to keep up with the speed of virtualized compute and storage, we’ve adopted virtualized networks that run as an overlay (with the physical Clos network becoming an underlay).

Overlays are not new to networking, just think of all those physical vs logical diagrams you’ve had to draw. We overlay Ethernet networks over physical networks and IP networks over Ethernet networks. We’ve used VLAN tags, MPLS labels, and GRE tunnels to create logical topologies that don’t line up with physical (or even other logical) connections.
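As one concrete example of a logical topology riding on top of another, here is a sketch of how an MPLS label is actually carried. Each label stack entry is a 32-bit word holding a 20-bit label, a 3-bit traffic class, a bottom-of-stack bit, and an 8-bit TTL (RFC 3032, with the traffic-class field renamed in RFC 5462). This shows only the on-the-wire format; it says nothing about how Contrail itself allocates or distributes labels, and the label values used are arbitrary.

```python
import struct


def encode_mpls_entry(label: int, tc: int, bottom_of_stack: bool, ttl: int) -> bytes:
    """Pack one 32-bit MPLS label stack entry: label(20) | TC(3) | S(1) | TTL(8)."""
    if not 0 <= label < 2**20:
        raise ValueError("label must fit in 20 bits")
    if not 0 <= tc < 8:
        raise ValueError("traffic class must fit in 3 bits")
    if not 0 <= ttl < 256:
        raise ValueError("TTL must fit in 8 bits")
    word = (label << 12) | (tc << 9) | (int(bottom_of_stack) << 8) | ttl
    return struct.pack("!I", word)  # network byte order


def decode_mpls_entry(data: bytes) -> tuple:
    """Inverse of encode_mpls_entry, applied to the first 4 bytes of data."""
    (word,) = struct.unpack("!I", data[:4])
    return word >> 12, (word >> 9) & 0x7, bool((word >> 8) & 0x1), word & 0xFF


def encode_label_stack(labels: list, ttl: int = 64) -> bytes:
    """Encode a label stack; only the last (innermost) entry sets the S bit."""
    last = len(labels) - 1
    return b"".join(
        encode_mpls_entry(label, 0, i == last, ttl) for i, label in enumerate(labels)
    )


stack = encode_label_stack([100, 200])
print(stack.hex())  # prints: 00064040000c8140
```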

What is new here is that we have moved the overlay completely off of traditional network devices. Using Contrail as the example at hand, you can see in the slide below that there is now a software controller communicating with software vRouters, all of which live on common compute platforms – not routers or switches.

OK, you already know all this. Stick with me; here’s where it gets interesting.

If we look at the underlay fabric, we realize that it looks just like the internal architecture of any multi-chip switch. The spine switches take the place of the fabric chips, and the leaf switches act as the network processors (Juniper’s Q5 chipset in the below example).

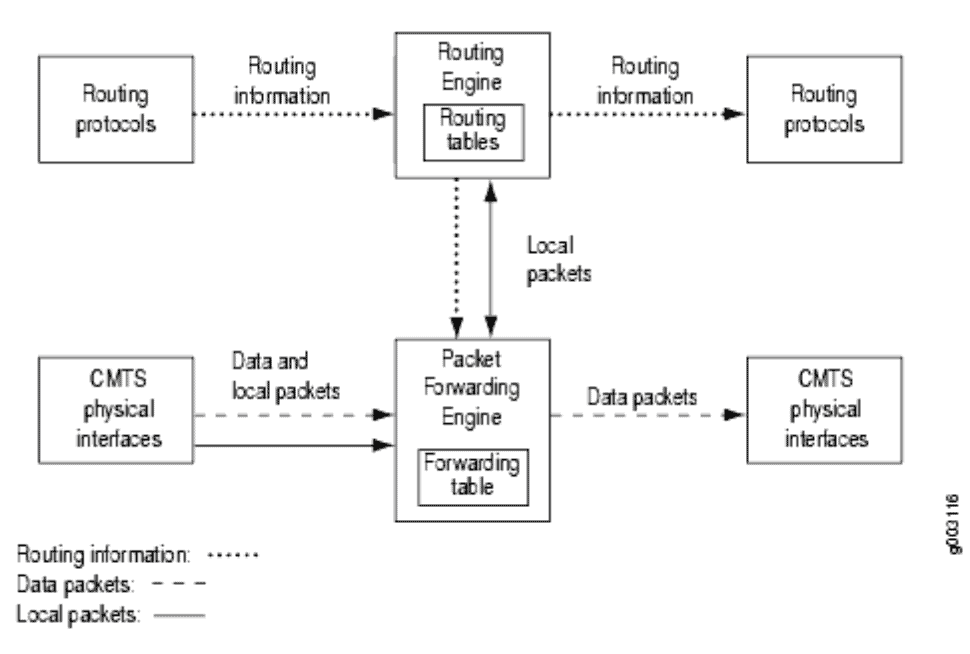

Likewise, we can think of the overlay as a software-only fully-distributed router. The controller is like a Routing Engine (supervisor) taking care of all the control (and management) plane duties. The “virtual ToR” software edge device (vRouter/vSwitch/etc) is like the Packet Forwarding Engine (line card), which handles the data plane duties. This includes forwarding, reporting, and enforcing.
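Here is a toy model of that analogy, just to show the division of labor. A central controller knows where every workload lives and programs each vRouter; each vRouter only does local longest-prefix-match lookups. The class names, hosts, and prefixes are hypothetical, and plain method calls stand in for whatever protocol a real controller uses to talk to its vRouters. This is not how Contrail is implemented.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class VRouter:
    """Toy forwarding element (the 'line card'): it only stores and looks up routes."""
    host: str
    fib: dict = field(default_factory=dict)  # ip_network -> next-hop host

    def install(self, prefix: str, next_hop: str) -> None:
        self.fib[ipaddress.ip_network(prefix)] = next_hop

    def lookup(self, dst: str):
        """Longest-prefix match against the locally installed routes."""
        addr = ipaddress.ip_address(dst)
        matches = [net for net in self.fib if addr in net]
        if not matches:
            return None
        return self.fib[max(matches, key=lambda net: net.prefixlen)]


class Controller:
    """Toy control plane (the 'routing engine'): knows where workloads live
    and programs every registered vRouter."""

    def __init__(self) -> None:
        self.vrouters = {}  # host -> VRouter
        self.routes = {}    # prefix -> host where the workload runs

    def register(self, vrouter: VRouter) -> None:
        self.vrouters[vrouter.host] = vrouter
        for prefix, host in self.routes.items():  # sync existing state
            vrouter.install(prefix, host)

    def workload_up(self, prefix: str, host: str) -> None:
        self.routes[prefix] = host
        for vrouter in self.vrouters.values():
            vrouter.install(prefix, host)


ctl = Controller()
vr1, vr2 = VRouter("compute-1"), VRouter("compute-2")
ctl.register(vr1)
ctl.register(vr2)
ctl.workload_up("10.0.1.0/24", "compute-1")
ctl.workload_up("10.0.1.5/32", "compute-2")  # a more specific route
print(vr1.lookup("10.0.1.5"), vr1.lookup("10.0.1.9"))  # prints: compute-2 compute-1
```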

Now you’re thinking: Chris, I get it, a Clos underlay looks like a switch and an “SDN” overlay looks like a router, so what?

I’m glad you asked!

Visualizing the network in this way gives us a new two-tier model. Instead of trying to force the physical network into an outdated hierarchy, we can now look at the entire logical network platform as a two-tier system: the underlay is the Core layer switch and the overlay is the Access layer router. This is super helpful when we want to decide where network functions should live. The Core is still there to move packets, fast, and the Access is there to handle routing and policy as well as to provide additional features and functions.

Boom. Mind blown… Right?

Contrail Security

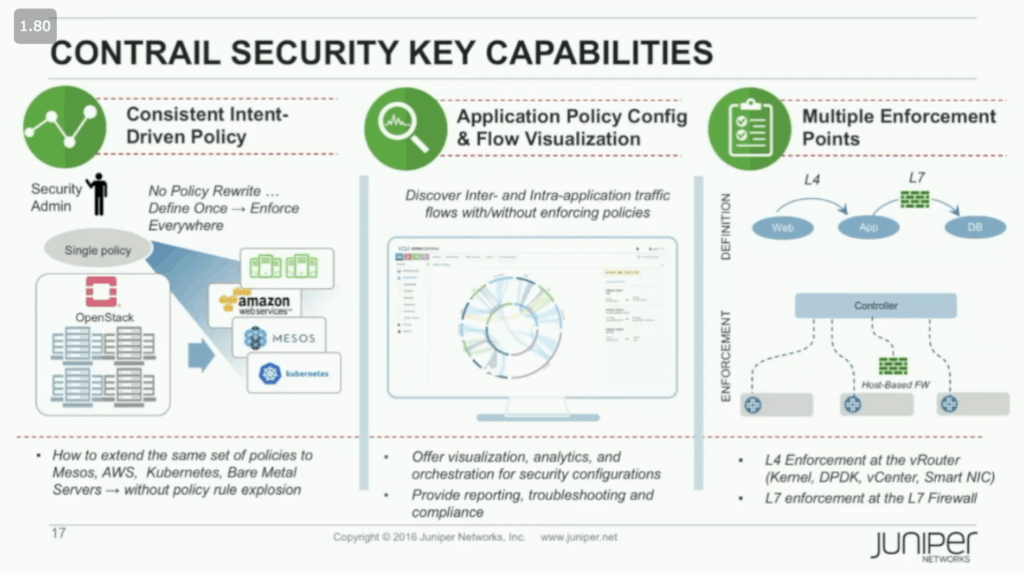

A great advantage of this type of overlay network is that server virtualization (through VMs or containers) moves the network edge into our compute hosts. A single physical server interface is actually shared by multiple applications, so the only way to truly connect one workload to one access port is to put those ports inside the host. By putting a vRouter on the host (or on a NIC), Contrail Security takes advantage of being a distributed access router by moving policy enforcement as close to the workload as possible. What’s more, you can easily re-route targeted traffic to a full-blown L7 firewall (or to any other device) as needed. Just as a physical router has no problem sending any traffic to any port, Contrail can do the same. The difference here is that those ports can be anywhere, because there is no physical chassis to limit interface location. This allows for rich service chaining across the entire fabric.

This ability to enforce policy at many points is one of the three pillars of Contrail Security. The other two are an evolved, intent-driven policy framework and enhanced flow visualization capabilities.
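To illustrate enforcing policy right next to the workload while steering selected traffic through an L7 firewall, here is a hypothetical rule evaluator. The rule format, the application names, and the “l7-firewall” service instance are all invented for this sketch; this is not Contrail’s policy schema, just the general idea of first-match rules where one action is “send it through a service chain first.”

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Flow:
    src_app: str
    dst_app: str
    dst_port: int


@dataclass(frozen=True)
class Rule:
    """Hypothetical policy rule evaluated by the vRouter next to the workload."""
    dst_app: str
    dst_port: Optional[int]      # None matches any port
    action: str                  # "pass", "deny", or "chain"
    chain: Tuple[str, ...] = ()  # service instances to traverse, in order


def enforce(flow: Flow, rules: List[Rule]) -> Optional[List[str]]:
    """Return the hops a flow traverses, or None if it is dropped.

    Rules are checked in order; the first match wins and unmatched
    flows are denied by default."""
    for rule in rules:
        if rule.dst_app == flow.dst_app and rule.dst_port in (None, flow.dst_port):
            if rule.action == "deny":
                return None
            if rule.action == "chain":
                return list(rule.chain) + [flow.dst_app]
            return [flow.dst_app]  # "pass": deliver directly
    return None


rules = [
    Rule("db", 3306, "chain", ("l7-firewall",)),  # steer DB traffic through an L7 firewall
    Rule("web", 443, "pass"),
]
print(enforce(Flow("web", "db", 3306), rules))  # prints: ['l7-firewall', 'db']
print(enforce(Flow("web", "db", 22), rules))    # prints: None
```

Because the chain is just a list of hops, the firewall instance could sit on any host in the fabric; nothing ties it to a particular physical port.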

The key to Contrail Security’s evolved policy framework is that it uses application attributes, not network coordinates. This eliminates policy rule sprawl (“explosion,” as they called it) by making policy definitions fungible. It also decentralizes the expression of policy intent, allowing developers, not just centralized security administrators, to apply policy. Don’t worry, you can still define global policies as needed.
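A quick way to see the rule explosion: take one intent, “shop web tier may talk to shop database tier,” and express it both ways. Written against network coordinates, it expands into one rule per source/destination IP pair and grows every time a workload is added; written against attributes, it stays a single rule. The inventory, IP addresses, and tag names below are hypothetical and chosen only for illustration.

```python
from itertools import product

# Hypothetical workload inventory: IP address -> attribute tags.
# Tag names ("application", "tier") are illustrative only.
INVENTORY = {
    "10.0.1.10": {"application": "shop", "tier": "web"},
    "10.0.1.11": {"application": "shop", "tier": "web"},
    "10.0.1.12": {"application": "shop", "tier": "web"},
    "10.0.2.20": {"application": "shop", "tier": "db"},
    "10.0.2.21": {"application": "shop", "tier": "db"},
    "10.0.3.30": {"application": "blog", "tier": "web"},
}

WEB = {"application": "shop", "tier": "web"}
DB = {"application": "shop", "tier": "db"}


def matches(tags: dict, selector: dict) -> bool:
    """True if every key/value in the selector is present in the workload's tags."""
    return selector.items() <= tags.items()


def expand_to_ip_rules(inventory: dict, src_sel: dict, dst_sel: dict) -> list:
    """Coordinate-based policy: one allow rule per (source IP, destination IP) pair."""
    srcs = [ip for ip, tags in inventory.items() if matches(tags, src_sel)]
    dsts = [ip for ip, tags in inventory.items() if matches(tags, dst_sel)]
    return list(product(srcs, dsts))


def attribute_rule_allows(inventory, src_sel, dst_sel, src_ip, dst_ip) -> bool:
    """Attribute-based policy: a single rule evaluated against tags at lookup time."""
    return matches(inventory[src_ip], src_sel) and matches(inventory[dst_ip], dst_sel)


print(len(expand_to_ip_rules(INVENTORY, WEB, DB)))  # prints: 6
```

Scale the web tier by one workload and the IP-based list grows to eight rules, while the attribute rule is unchanged and covers the new workload as soon as it carries the right tags.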

As you might expect, real-time visualization of traffic flows is included. What’s interesting is that the visualization is application-centric, allowing you to understand your security posture as it relates to the application topology, not the network topology. In addition to data collected from the vRouters, Contrail’s collector can ingest sFlow, NetFlow, IPFIX, Google Protocol Buffers, and SNMP, providing rich information from all types of physical devices as well, which leads us to…

Contrail Multicloud

What if you could take this idea of a single, distributed software-only access layer router and apply it across all the various environments where your workloads live?

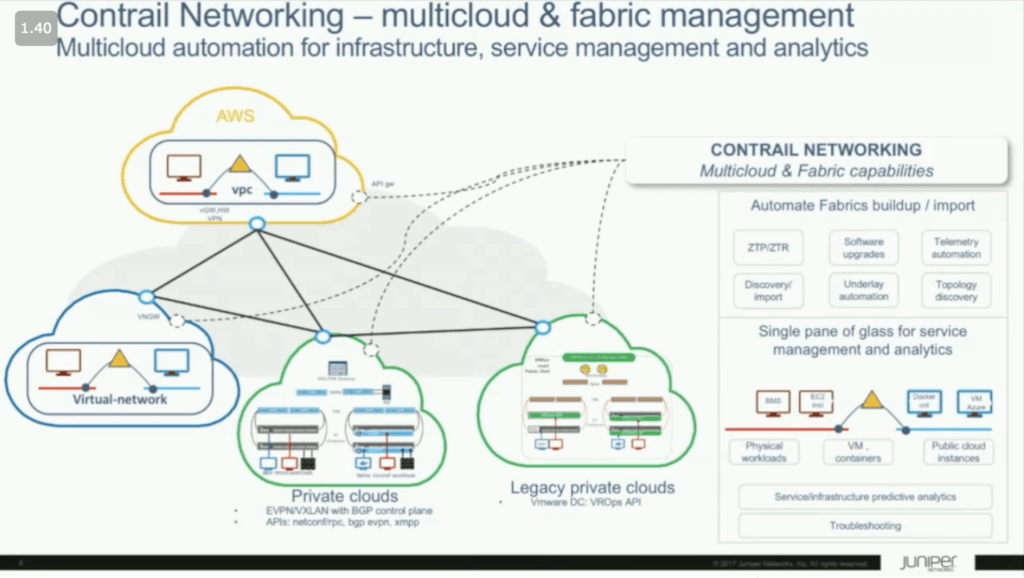

You guessed it, that is exactly what Contrail Multicloud aims to do. By speaking the native language within each of these environments (APIs for public cloud and NETCONF & BGP for physical devices) and abstracting that behind a single pane of glass, Contrail Multicloud can interconnect your multitude of separate underlay fabrics into a single, secure network platform.
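Here is a rough sketch of that “speak each environment’s native language behind one interface” idea, using a simple adapter pattern. Everything in it is hypothetical: the intent type, the adapter classes, and the environment names are invented, and the adapters only return a textual plan rather than calling any real cloud API or touching any device. It is meant to show the shape of the abstraction, not how Contrail Multicloud is built.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ConnectIntent:
    """Hypothetical intent: allow segment src to reach segment dst."""
    src_segment: str
    dst_segment: str


class EnvironmentAdapter(ABC):
    """One adapter per environment, translating intent into that environment's
    native interface. Here adapters only return a textual plan."""

    def __init__(self, name: str) -> None:
        self.name = name

    @abstractmethod
    def plan(self, intent: ConnectIntent) -> List[str]:
        ...


class PublicCloudAdapter(EnvironmentAdapter):
    def plan(self, intent: ConnectIntent) -> List[str]:
        return [f"{self.name}: provider API call to route "
                f"{intent.src_segment} -> {intent.dst_segment}"]


class PhysicalFabricAdapter(EnvironmentAdapter):
    def plan(self, intent: ConnectIntent) -> List[str]:
        return [
            f"{self.name}: NETCONF edit to attach {intent.dst_segment}",
            f"{self.name}: BGP advertisement for {intent.src_segment}",
        ]


class MulticloudController:
    """The 'single pane of glass': one intent in, one plan per environment out."""

    def __init__(self, adapters: List[EnvironmentAdapter]) -> None:
        self.adapters = list(adapters)

    def apply(self, intent: ConnectIntent) -> Dict[str, List[str]]:
        return {adapter.name: adapter.plan(intent) for adapter in self.adapters}


controller = MulticloudController(
    [PublicCloudAdapter("public-cloud-a"), PhysicalFabricAdapter("on-prem-fabric")]
)
for env, steps in controller.apply(ConnectIntent("web", "db")).items():
    print(env, steps)
```

The operator states the intent once; adding another environment means writing another adapter, not another policy.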

Of course, I could never do as good a job describing Contrail, and specifically Contrail Multicloud, as the folks who are managing the product line at Juniper Networks. Instead of trying, I’ll just let you watch the videos of their Network Field Day 17 presentations:

Aniket Daptari, Product Line Manager at Juniper Networks, gives the delegates a historical overview of the Contrail platform. He then reviews how the company will expand it going forward, including uses for multicloud networking.

Aniket Daptari, Product Line Manager at Juniper Networks, reviews how security is handled within the Contrail platform. He reviews this policy framework and how it is designed to leverage application attributes, rather than simply network coordinates.

Jacopo Pianigiani, Director, Product Management at Juniper Networks, reviews how Contrail handles fabric management when working with multicloud. This is designed to simplify and normalize in order to provide a usable view of networking as a service in cross-cloud environments.
