I’ve been watching the AI infrastructure chip market for a while now, and it has been, to put it gently, a fairly consolidated landscape. Broadcom has owned the switch silicon conversation for years with Tomahawk, Trident, and Jericho, while on the DPU/SmartNIC side it’s largely been Nvidia BlueField, AMD Pensando, and Intel IPU trading punches. So when a fabless startup walked into AI Infrastructure Field Day 4 and said “we’ve rethought the architecture from scratch, and the chips are already shipping,” I leaned in. That company was Xsight Labs, and they presented two genuinely interesting products, the X-Series Ethernet switch chip and the E-Series DPU, along with a philosophy of openness and programmability that deserves some attention.

The Problem with Fixed Pipelines

The networking silicon market has long rewarded performance and scale above all else. That’s how you end up with Broadcom’s dominance: their chips are fast, well-supported, and everywhere. But that dominance has come at a price, and the price is fixed-function pipelines. Traditional Ethernet switch ASICs are simple in concept: packets enter, flow through a series of predefined processing stages, and exit. Customization happens at the configuration layer, not in the forwarding logic. A newer variation, sometimes called the “mapped pipeline” approach, lets vendors choose which features run in which pipeline stage, but the pipeline itself is still fixed hardware. Remapping stages to accommodate a bug fix or a new feature can fail outright when the new logic doesn’t fit in the available stages, and adding new capabilities often means waiting for the next chip generation. In an AI networking world where protocols, congestion management schemes, and fabric architectures are all still evolving rapidly, that rigidity is a real problem.
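To make the mapped-pipeline failure mode concrete, here is a toy Python model. It is not any vendor’s actual compiler: each stage gets a fixed resource budget (standing in for what is baked into silicon), and features are placed greedily, first fit. The stage budgets, feature names, and costs, along with the helpers `Feature` and `map_features`, are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    cost: int            # hypothetical resource units (table/ALU slots) the feature needs
    earliest_stage: int  # first stage it may occupy, e.g. only after headers are parsed


def map_features(stage_capacity, features):
    """Greedy first-fit placement of features onto fixed pipeline stages.

    stage_capacity: per-stage resource budgets, fixed in hardware.
    Returns (placement, unplaced): feature name -> stage index, plus the
    names of features that did not fit in any eligible stage.
    """
    free = list(stage_capacity)  # copy so the caller's budget list is untouched
    placement, unplaced = {}, []
    for feat in features:
        for idx in range(feat.earliest_stage, len(free)):
            if free[idx] >= feat.cost:
                free[idx] -= feat.cost
                placement[feat.name] = idx
                break
        else:
            # for/else: reached only when no eligible stage had room.
            # With a fixed pipeline there is no extra stage to add.
            unplaced.append(feat.name)
    return placement, unplaced


if __name__ == "__main__":
    pipeline = [4, 4, 4, 4]  # four stages, 4 units each (made-up numbers)
    features = [
        Feature("vlan", 2, 0),
        Feature("ipv4-lpm", 3, 1),
        Feature("acl", 3, 2),
        Feature("new-congestion-tag", 5, 1),  # hypothetical new feature, too big for any stage
    ]
    print(map_features(pipeline, features))
    # ({'vlan': 0, 'ipv4-lpm': 1, 'acl': 2}, ['new-congestion-tag'])
```

Real pipeline compilers use far more sophisticated allocation than first fit, but the underlying constraint is the same: once the fixed stages are full, no placement succeeds.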

Xsight Labs: Two Chips, One Philosophy

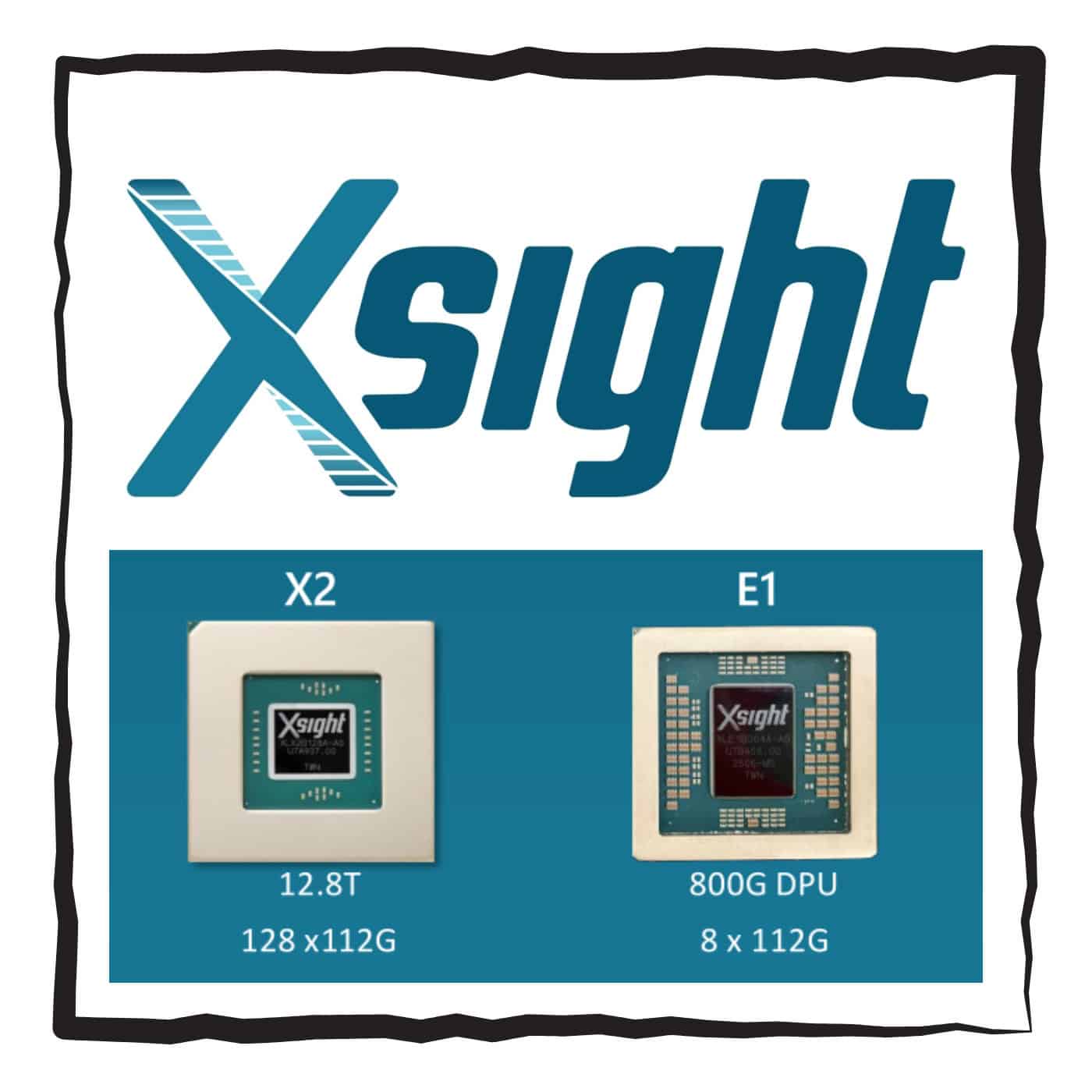

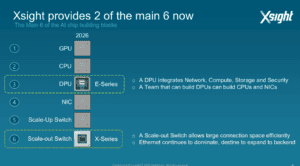

Xsight Labs is a fabless semiconductor company founded in 2017, with about 260 people, $440M raised, and, critically, two chips actually shipping today. Their X2 is a 12.8 Tbps Ethernet switch, and their E1 is an 800 Gbps DPU. The team has serious pedigree: alumni from Marvell, Mellanox, Annapurna Labs (AWS Nitro), Habana Labs, and Cisco Silicon One. Avigdor Willenz (the man who pioneered merchant silicon switches with Galileo Technology and has since helped build companies sold to Marvell, Amazon, Cisco, and Intel) is the chairman. These aren’t newcomers; they’ve shipped silicon at scale before and are taking another swing with a specific architectural thesis: both chips should be truly software-defined, open, and programmable without compromising performance.

What Programmability Actually Means Here

This is where Xsight’s story gets interesting. The X2 switch chip is neither a fixed pipeline nor a mapped pipeline. It uses 3,072 small Harvard-architecture cores running a run-to-completion model. Instead of forcing your logic to fit a predefined sequence of processing stages, you write code that runs to completion for each packet, with real recursion, parallel operations, and the flexibility to handle any protocol or header structure. Latency comes in at around 450 nanoseconds, competitive with fixed-function silicon, at under 200 watts for 12.8 Tbps. That last number is striking: Xsight claims competitors running similar bandwidth are well north of 300 W, some over 600 W. They’ve also fully open-sourced the Instruction Set Architecture (XISA), and Oxide Computer, an early ecosystem partner, wrote a P4 compiler directly on top of it.
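For contrast, a run-to-completion handler owns a packet from its first byte to the final verdict. The Python sketch below is only an analogy; it is not XISA code and says nothing about what the X2 toolchain actually looks like. The hypothetical `handle_packet` walks however many 802.1Q/802.1ad VLAN tags a frame carries in a plain loop, whereas a pipeline with a fixed number of parse stages can typically only handle as many tags as it has stages. A thread pool loosely stands in for many small cores, each taking the next packet; `make_frame` is a hypothetical test helper.

```python
import struct
from concurrent.futures import ThreadPoolExecutor

VLAN_TPIDS = {0x8100, 0x88A8}  # 802.1Q and 802.1ad tag protocol identifiers


def handle_packet(frame: bytes) -> dict:
    """Process one Ethernet frame to completion and return a verdict.

    Bytes 0-11 hold the destination and source MACs; the EtherType (or a
    VLAN TPID) follows at offset 12. Each VLAN tag adds 4 bytes: a 2-byte
    TCI, then the next EtherType/TPID.
    """
    if len(frame) < 14:
        return {"verdict": "drop", "reason": "runt"}
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    offset, vlans = 14, []
    while ethertype in VLAN_TPIDS:  # no fixed limit on the number of tags
        if len(frame) < offset + 4:
            return {"verdict": "drop", "reason": "truncated tag"}
        tci, ethertype = struct.unpack_from("!HH", frame, offset)
        vlans.append(tci & 0x0FFF)  # low 12 bits of the TCI are the VLAN ID
        offset += 4
    return {"verdict": "forward", "vlans": vlans,
            "ethertype": ethertype, "payload_offset": offset}


def make_frame(vlan_ids, ethertype=0x0800):
    """Build a test frame: broadcast dst, fixed src, stacked 802.1Q tags."""
    macs = b"\xff" * 6 + b"\x00\x11\x22\x33\x44\x55"
    tags = b"".join(struct.pack("!HH", 0x8100, vid) for vid in vlan_ids)
    return macs + tags + struct.pack("!H", ethertype) + b"payload"


if __name__ == "__main__":
    frames = [make_frame([]), make_frame([100]), make_frame([100, 200, 300], 0x86DD)]
    with ThreadPoolExecutor(max_workers=4) as pool:  # loose stand-in for many cores
        for result in pool.map(handle_packet, frames):  # results come back in input order
            print(result)
```

The point of the model is the shape of the code: one function with ordinary control flow, rather than logic split across a fixed sequence of stages.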

The E1 DPU takes an equally contrarian stance. Where the dominant DPU designs started as high-performance NICs and bolted on ARM cores to handle control-plane exceptions, Xsight started from the ARM server side and worked toward the network. The result is 64 ARM Neoverse N2 cores with a fully coherent fabric, DDR5 memory, and PCIe Gen5, sized so the entire data plane can run in software using standard Linux programming models. No proprietary pipelines, no split-programming-model headaches. Hammerspace, a storage software company, chose the E1 for an AI flash storage deployment and cited its simplicity compared with competing DPUs from Nvidia, AMD, and Intel as a key factor.
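When the data plane is ordinary software on many general-purpose cores, a common pattern is to pin each flow to one core so per-flow state needs no locks and packets within a flow stay in order. The sketch below shows that idea in plain Python; it is an assumption about how one might structure such a data plane, not Xsight’s software. `core_for_flow` and `dispatch` are hypothetical, using all 64 cores for packet work is assumed, and BLAKE2b stands in for the Toeplitz hash that NIC receive-side scaling (RSS) typically uses.

```python
import hashlib
import socket
import struct

NUM_CORES = 64  # E1 core count; dedicating all of them to the data plane is an assumption


def core_for_flow(src_ip: str, dst_ip: str, proto: int, sport: int, dport: int,
                  num_cores: int = NUM_CORES) -> int:
    """Map an IPv4 5-tuple to a worker core deterministically.

    Every packet of a flow hashes to the same core, so that core can own
    the flow's state without locking.
    """
    key = (socket.inet_aton(src_ip) + socket.inet_aton(dst_ip)
           + struct.pack("!BHH", proto, sport, dport))
    digest = hashlib.blake2b(key, digest_size=8).digest()
    return int.from_bytes(digest, "big") % num_cores


def dispatch(packets, num_cores: int = NUM_CORES):
    """Group packets (represented here by their 5-tuples) into per-core queues."""
    queues = [[] for _ in range(num_cores)]
    for pkt in packets:
        queues[core_for_flow(*pkt, num_cores=num_cores)].append(pkt)
    return queues


if __name__ == "__main__":
    pkts = [("10.0.0.1", "10.0.1.9", 6, 40000 + i, 443) for i in range(1000)]
    queues = dispatch(pkts)
    busiest = max(len(q) for q in queues)
    print(f"{sum(map(len, queues))} packets over {NUM_CORES} cores, busiest core has {busiest}")
```

Each per-core queue would then feed a run-to-completion loop on that core, written with the same tools and libraries as any other Linux program.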

The Catch: Flexibility Requires Investment

Here’s the honest tradeoff worth naming. All of this programmability is real, but it doesn’t come for free. With a fixed-function switch chip, the vendor ships the features. With the X2, the power is in your hands, which means the responsibility is too. Oxide had to build their own P4 compiler to unlock the X2’s architecture; that’s not a trivial undertaking. Xsight does provide extensive SDKs, a SONiC distribution, and a behavioral model for simulation, and the E1 runs any standard ARM Linux distribution unmodified. But the ceiling of what’s possible scales with what you bring to it in software. For teams with deep networking engineering talent, this is a feature. For teams accustomed to buying a black-box switch and loading a vendor NOS, it’s a different kind of engagement. Xsight’s bet is that the industry is ready to make that investment, and the ecosystem they’re building around open tooling suggests they know it won’t happen overnight.

Competition Makes Everyone Better

The switch ASIC market has needed more competition for a while. A single dominant vendor, however excellent, produces a kind of inertia: protocols and architectures get designed around what that vendor supports, and the feedback loop reinforces itself. Xsight Labs doesn’t fix that overnight, but they represent something the market needed: a well-funded, well-pedigreed team with chips in production that take programmability seriously at the architecture level. The SpaceX Starlink V3 deployment (each satellite handling more than 1 Tbps of fronthaul throughput) is a real-world proof point, not a lab demo. For anyone building AI infrastructure, or just thinking about what comes after the current generation, Xsight Labs is worth watching closely.

Xsight Labs presented at AI Infrastructure Field Day 4 in January 2026. For a deeper technical dive into the X-Switch architecture, Wheeler’s Network published a solid white paper on the open ISA. Coverage of the Starlink V3 win from ServeTheHome is also worth a read.